Tech entrepreneur Elon Musk has never been shy about bold forecasts. From electric vehicles to reusable rockets, many of his predictions have shaped global industries. Now he’s turning his attention to artificial intelligence, suggesting that within 36 months the cheapest place to develop and deploy AI could shift dramatically, potentially redefining how and where AI innovation happens.

His statement hints at a future where the economics of artificial intelligence undergo rapid transformation. Today, building and running advanced AI systems requires enormous computing power, massive data centers, and significant energy resources. Training large-scale AI models can cost millions of dollars, placing the technology largely in the hands of major corporations and well-funded startups.

But Musk believes this cost barrier won’t last.

The Cost of Intelligence

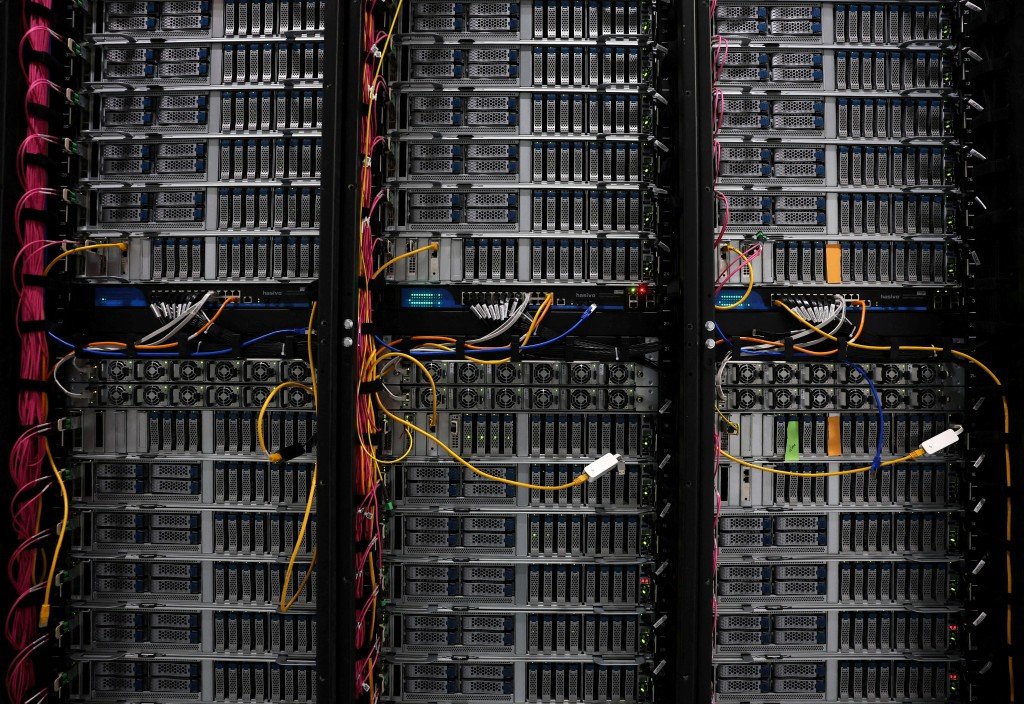

At the core of AI development lies compute — vast networks of high-performance chips working in parallel to process and learn from massive datasets. Companies invest heavily in specialized hardware, cooling systems, and infrastructure to support these operations.

Through ventures like xAI, Musk has already signaled his ambition to compete in the AI race. Building advanced supercomputing clusters requires not just capital but also access to efficient energy sources and supply chains for cutting-edge chips.

Currently, AI development tends to be most affordable where electricity is cheap, regulations are favorable, and infrastructure is readily available. Regions with abundant renewable energy or government incentives have become attractive hubs for data centers.

If Musk’s 36-month prediction proves accurate, we may see a geographic and economic shift in AI deployment — possibly toward areas that combine low energy costs with technological sophistication.

Energy as the Deciding Factor

One of the biggest operational costs for AI data centers is electricity. Training large models consumes staggering amounts of power. Musk, whose other ventures include Tesla and SpaceX, understands the intersection of energy innovation and technological advancement.

If breakthroughs in battery storage, renewable generation, or grid efficiency continue at their current pace, AI infrastructure could become dramatically cheaper to operate. Solar and wind energy, combined with large-scale storage systems, may reduce reliance on expensive fossil-fuel-based grids.

Cheaper energy means cheaper AI.
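To make that link concrete, here is a rough back-of-the-envelope sketch. Every figure in it is a hypothetical assumption chosen for illustration (a 10 MW cluster, a 30-day training run, three sample electricity prices); none comes from Musk or from any real data center. It shows only that the electricity bill for a fixed workload scales in direct proportion to the price per kilowatt-hour.

```python
def electricity_cost_usd(power_mw: float, hours: float, price_per_kwh: float) -> float:
    """Electricity cost of a cluster drawing `power_mw` continuously for `hours`."""
    energy_kwh = power_mw * 1000 * hours  # MW -> kW, then kW x hours = kWh
    return energy_kwh * price_per_kwh


# Hypothetical scenario: a 10 MW cluster running for 30 days.
CLUSTER_POWER_MW = 10
RUN_HOURS = 30 * 24

# Illustrative electricity prices in USD per kWh (assumed, not sourced).
for price in (0.12, 0.06, 0.03):
    cost = electricity_cost_usd(CLUSTER_POWER_MW, RUN_HOURS, price)
    print(f"${price:.2f}/kWh -> ${cost:,.0f} for the run")
```

Under these assumptions, halving the electricity price halves the power bill for the same run. Real deployments also pay for cooling overhead, hardware, and staff, which this sketch leaves out.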

Some analysts speculate that Musk’s timeline may align with improvements in chip manufacturing and AI model optimization. As hardware becomes more efficient and algorithms require less compute to achieve higher performance, the overall cost per AI task could fall significantly.

Decentralization of AI Development

A drop in infrastructure costs would have profound implications. Today, AI leadership is concentrated among a handful of global tech giants. Lower barriers to entry could empower universities, startups, and even smaller nations to build competitive AI systems.

This democratization could spark rapid innovation. Instead of centralized mega-labs dominating research, we might see distributed ecosystems where AI experimentation flourishes worldwide.

At the same time, reduced costs may intensify competition. If it becomes inexpensive to train advanced models, the pace of AI advancement could quicken dramatically, raising new ethical and regulatory questions.

Hardware Evolution and Efficiency

Another key factor behind Musk’s prediction may lie in hardware innovation. AI chips are evolving quickly, with manufacturers designing processors tailored specifically for neural networks. These specialized units deliver more performance per watt than general-purpose processors.

If manufacturing scales efficiently and supply constraints ease, chip prices could drop within the next few years. Combined with smarter software that requires fewer training cycles, AI development could become significantly more affordable.

Additionally, advancements in edge computing — where AI runs locally on devices instead of centralized servers — may further reduce reliance on massive data centers.

Implications for the Global AI Race

Musk’s 36-month timeline adds urgency to the global AI race. Governments and corporations are investing heavily to secure technological leadership. If the “cheapest place” to develop AI shifts, it could alter competitive advantages among nations.

Countries with abundant renewable resources, favorable regulatory frameworks, and strong technical talent pools may emerge as AI powerhouses.

However, lower costs also bring responsibility. As AI becomes more accessible, deploying it safely becomes critical. Safety frameworks, transparency, and governance must evolve alongside affordability.

A Bold Yet Plausible Forecast

Elon Musk has a history of ambitious timelines — some met, others extended. Yet the trajectory of AI costs suggests meaningful change is possible. Hardware efficiency, renewable energy expansion, and software optimization are advancing rapidly.

If the economics of AI shift within 36 months, the technology could move from being capital-intensive and centralized to widely accessible and globally distributed.

Whether Musk’s prediction materializes exactly as stated or unfolds more gradually, one thing is clear: the cost of intelligence is falling. And when intelligence becomes cheaper, its impact multiplies — reshaping industries, economies, and perhaps the very structure of innovation itself.